Usage-centric Testing part 2: a shift-right approach

In the first part of the “Usage-centric Testing” article, we discussed the problems that come with End-to-End tests as our applications grow: longer feedback loop, irrelevant tests, maintenance complexity, and so on. In this second part, we will see how shift-right testing, and particularly “Usage-centric Testing”, can help overcome these problems.

Read the first part of this article here

Testing in the era of continuous deployment

Before we start, a quick reminder of the “Usage-centric Testing” approach: it helps design more accurate tests by understanding users’ behavior, and leads to targeted test suites that can improve quality and shorten release cycles. With that in mind, let’s start with the DevOps loop.

DevOps practices have dramatically accelerated the pace of software delivery. Some teams can push new improvements to production multiple times a day, so their users can see their tool evolve almost instantly.

Test early, test often, be agile.

One of the enablers of such speed can be summarized in a single mantra: “Test early, test often”. The ability to ensure software quality continuously, during all phases of development, is one of the foundations that make DevOps possible. Since we can’t spend days on exploratory testing for every new release candidate, we rely heavily on automated checks at every stage of development. With early feedback, the team can fix problems before anything is released, which is far less expensive than fixing them afterwards. To get feedback both early and frequently, QA happens before and after development. This is what is commonly known as shift-left (a term coined by Larry Smith in 2001) and shift-right.

Shift left

Shift-left groups all the testing activities that happen in the “Dev” part of the DevOps infinite loop. QA is involved from the early stages of the cycle, so that defects can be detected and fixed as early as the requirements definition. TDD (Test-Driven Development) is an example of shift-left testing: we write tests before writing the code. Likewise, BDD (Behaviour-Driven Development) formalizes user stories into test scenarios, which are automated before any code is written.

Shift right

Shift-right, on the other hand, encompasses all the tests we can perform on our application in its production environment. Here again, we’re aiming to get feedback to continuously improve the quality of our product:

- Detect production issues as early as possible

Nothing is closer to the production environment than production itself. Switching from a controlled environment (development or staging) to “real life” may not be as smooth as expected. Monitoring the production environment helps us identify and fix issues before they impact the users’ experience. For example, many tools on the market allow teams (testers, engineers, developers…) to detect performance issues so they can fix them quickly. Canary testing can also validate new code in production while limiting its exposure to a selected subset of users.

- Get insights into user preferences

The application in the hands of end-users is the only place where you can get reliable data about their habits and preferences. This is why practices like A/B testing have become so widespread. Getting feedback from our product’s users helps us improve its usability and prioritize further developments. This feedback can also be used as input to better target our testing effort…
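Both canary testing and A/B testing rely on exposing a change to only a subset of users. The snippet below is a minimal sketch of one common way to do this, deterministic hash-based bucketing. It is not taken from any specific tool: the function name `in_canary`, the `salt` value, and the user identifiers are assumptions made for illustration.

```python
import hashlib


def in_canary(user_id: str, rollout_percent: float, salt: str = "release-42") -> bool:
    """Return True if this user should receive the canary build.

    Hypothetical helper: the salt and the bucketing scheme are illustrative
    assumptions, not the behavior of a particular product.
    """
    # Hash the user id so the same user always lands in the same bucket.
    digest = hashlib.sha256(f"{salt}:{user_id}".encode("utf-8")).hexdigest()
    bucket = int(digest[:8], 16) % 10_000  # bucket in 0..9999
    # rollout_percent=5 selects buckets 0..499, i.e. roughly 5% of users.
    return bucket < rollout_percent * 100


# Made-up user ids, used only to show the resulting share of exposed users.
users = [f"user-{i}" for i in range(10_000)]
canary_users = [u for u in users if in_canary(u, rollout_percent=5)]
share = len(canary_users) / len(users)
print(f"{share:.1%} of users see the canary build")
```

Because the assignment depends only on the user id and the salt, a given user keeps seeing the same version across sessions, and raising `rollout_percent` only adds users to the exposed group.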

Learning from our users’ behavior to optimize our E2E tests

We can benefit a lot from seeking feedback from our application in its production environment. At Gravity, we use “Usage-centric Testing” to improve our test suite by leveraging users’ behavioral data. This allows us to find the perfect balance between velocity and reliability. As we saw in the first part of this article, designing the most suitable End-to-End test suite is more complicated than it looks. We think that informing our testing decisions with what we know about our users’ behavior can greatly help:

- Improve test coverage

Our tests are based on assumptions about how our users will interact with a feature. However, users won’t always behave as anticipated, so they may run into regressions that our tests did not detect. Learning the common user journeys from production helps us secure them with new regression tests, to keep our users and ourselves happy.

- Clean up End-to-End test suite

We also find great value in learning how our features are used. It is the perfect opportunity to redesign (or even get rid of) End-to-End tests that check paths our users never take. By being data-informed, we can focus our test effort on actual behaviors. The benefit is twofold: a shorter feedback loop thanks to faster test runs, and critical user paths protected against regressions.

-

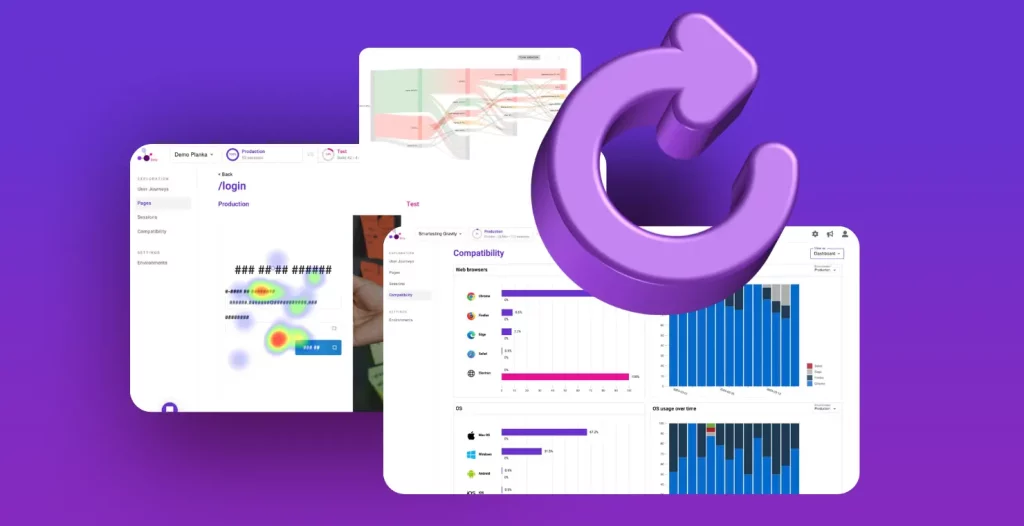

Prioritize environments

Demographic data can also be leveraged. The devices we use to interact with software have multiplied, making testing even harder. There are a lot of products on the market that make cross-device testing easier (BrowserStack or TestComplete, for instance). By getting insights into our users’ most common environments, we can effectively prioritize the test runs for these environments and, again, shorten our feedback loop.
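The three ideas above (coverage, clean-up, environment prioritization) can be sketched with a few lines of Python. This is only an illustration under simplifying assumptions: all data below is made up, a journey is reduced to an ordered tuple of page names, and a test is considered to cover a journey only when the two match exactly. It does not describe how Gravity or any other tool computes these figures.

```python
from collections import Counter

# Hypothetical production data: one tuple of visited pages per user session.
production_journeys = [
    ("login", "dashboard", "export"),
    ("login", "dashboard", "export"),
    ("login", "dashboard", "export"),
    ("login", "search", "product", "checkout"),
    ("login", "search", "product", "checkout"),
    ("login", "settings"),
]

# Hypothetical E2E suite: test name -> journey the test exercises.
e2e_tests = {
    "test_export_report": ("login", "dashboard", "export"),
    "test_change_password": ("login", "settings", "password"),
}

# Hypothetical environment (browser and device) of each session.
session_environments = ["chrome-desktop"] * 4 + ["safari-ios"] + ["firefox-desktop"]


def usage_coverage(journeys, tests):
    """Share of production sessions whose journey is exercised by a test."""
    tested = set(tests.values())
    return sum(1 for journey in journeys if journey in tested) / len(journeys)


def untested_journeys(journeys, tests):
    """Journeys seen in production without a matching test, most frequent first."""
    tested = set(tests.values())
    return Counter(j for j in journeys if j not in tested).most_common()


def unused_tests(journeys, tests):
    """Names of tests whose journey never appeared in production."""
    seen = set(journeys)
    return sorted(name for name, journey in tests.items() if journey not in seen)


def environment_priority(environments):
    """Environments with their share of sessions, most used first."""
    counts = Counter(environments)
    return [(env, n / len(environments)) for env, n in counts.most_common()]


print(usage_coverage(production_journeys, e2e_tests))
print(untested_journeys(production_journeys, e2e_tests))
print(unused_tests(production_journeys, e2e_tests))
print(environment_priority(session_environments))
```

With this sample data, the checkout journey shows up as the most frequent untested path (a candidate for a new regression test), while `test_change_password` exercises a path that no session took (a candidate for redesign or removal).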

These are a few examples of how we can benefit from informing our testing decisions with knowledge of how our users actually use our products. Now, let’s see how we can concretely do this.

How do we do that?

There are a lot of techniques and testing tools that can help us learn how our product is used.

Usability tests

These tests are a powerful tool in the UX designer’s toolbox. In short, you give a potential user a task to accomplish with your application, and you simply observe how they work it out. They are a treasure trove of insights into how people deal with your application. These tests are primarily designed to help improve the usability of your service, but they can also help design new and more accurate automated E2E tests.

Web analytics

Unlike usability tests (which are expensive to set up), web analytics tools make it easy to collect quantitative product usage data. With tools like Google Analytics, Amplitude, or Mixpanel, you can easily monitor user actions (clicks, business actions, page visits, etc.) and collect demographic information. Products like Hotjar go even further by providing behavior analytics features such as heatmaps or session recordings.
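To give an idea of how raw analytics events can turn into something a tester can use, here is a minimal sketch that groups events by session and counts the resulting journeys. The event format (`session_id`, timestamp, action) is an assumption made for the example: each analytics tool has its own export format, which is not shown here.

```python
from collections import Counter, defaultdict

# Hypothetical export: (session_id, timestamp_in_seconds, action).
events = [
    ("s1", 10, "login"), ("s2", 11, "login"), ("s1", 25, "search"),
    ("s2", 30, "dashboard"), ("s1", 40, "checkout"), ("s3", 50, "login"),
    ("s3", 65, "search"), ("s3", 80, "checkout"),
]


def journeys_by_session(events):
    """Rebuild each session's journey as a tuple of actions in time order."""
    grouped = defaultdict(list)
    for session_id, timestamp, action in events:
        grouped[session_id].append((timestamp, action))
    return {
        session_id: tuple(action for _, action in sorted(steps))
        for session_id, steps in grouped.items()
    }


def most_common_journeys(events, top=3):
    """Most frequent journeys across sessions, with their session counts."""
    return Counter(journeys_by_session(events).values()).most_common(top)


for journey, count in most_common_journeys(events):
    print(count, " > ".join(journey))
```

The most frequent journeys are natural candidates for End-to-End regression tests, since they represent what users do most often.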

Gravity

All the tools we have talked about so far are great and do help to understand users’ behavior. Their only flaw (in the context of our topic) is that they are not designed to assist with software testing. This is why we built Gravity, which offers functionalities like auto-detection of patterns in users’ behavior, test coverage against product usage, or Cypress script generation, to help you tailor a test suite fitted to your application’s actual usage.

Conclusion

We hope this article has given you some tips to help you answer this tricky question of “what to test?”. We build software for humans. It is now common to adopt a user-centered design approach to create useful, desirable, and delightful services. As we saw, such an approach can also be beneficial for the efficiency of our delivery process, and to prevent regressions before they impact our customers’ satisfaction.

This is precisely what we are aiming for by developing Gravity as a Usage-centric Testing platform. Do not hesitate to contact us to learn more, or to give it a try. We, too, want to deliver the best service possible, and your feedback would be really valuable on this journey!

Thank you for reading!